Introduction & Overview

Anthropic's Responsible Scaling Policy

Version 1.0 Effective September 19, 2023

As AI models become more capable, Anthropic believes that they will create major economic and social value, but will also present increasingly severe risks. With this document we are making a public commitment to a concrete framework for managing these risks--one that will evolve over time, but that seeks to establish clear expectations and accountability in its initial form.

We focus these commitments specifically on catastrophic risks1, defined as large-scale devastation (for example, thousands of deaths or hundreds of billions of dollars in damage) that is directly caused by an AI model and wouldn't have occurred without it. AI represents a spectrum of risks and these commitments are designed to deal with the more extreme end of this spectrum. This work is complementary to our work on other areas of AI safety, including mitigating harms like misinformation, bias, and toxicity, studying societal impacts, protecting customer privacy, building robust and reliable systems, and developing techniques like Constitutional AI for alignment with human values.

Note that these commitments primarily relate to internal testing and development practices for future more powerful versions of Claude. They do not alter current uses of Claude or any of Anthropic's present offerings (beyond safety practices we already engage in).

Our commitments are designed in the spirit of the Responsible Scaling Policy (RSP) framework being developed by Paul Christiano and ARC Evals, as well as emerging government policy proposals on responsible AI development in the UK, EU, and US. We thank ARC Evals for substantial advice and collaboration on the development of our commitments.

Executive Summary

Responsible Scaling Policy, Version 2.2, Effective May 14, 2025. Supplementary info available at www.anthropic.com/rsp-updates.

In September 2023, we released our Responsible Scaling Policy (RSP), a public commitment not to train or deploy models capable of causing catastrophic harm unless we have implemented safety and security measures that will keep risks below acceptable levels. We are now updating our RSP to account for the lessons we've learned over the last year. This updated policy reflects our view that risk governance in this rapidly evolving domain should be proportional, iterative, and exportable.

BackgroundAI Safety Level Standards (ASL Standards) are a set of technical and operational measures for safely training and deploying frontier AI models. These currently fall into two categories: Deployment Standards and Security Standards. As model capabilities increase, so will the need for stronger safeguards, which are captured in successively higher ASL Standards. At present, all of our models must meet the ASL-2 Deployment and Security Standards. To determine when a model has become sufficiently advanced such that its deployment and security measures should be strengthened, we use the concepts of Capability Thresholds and Required Safeguards. A Capability Threshold tells us when we need to upgrade our protections, and the corresponding Required Safeguards tell us what standard should apply.

Capability Thresholds and Required SafeguardsThe Required Safeguards for each Capability Threshold are intended to mitigate risk to acceptable levels. This update to our RSP provides specifications for Capabilities Thresholds related to Chemical, Biological, Radiological, and Nuclear (CBRN) weapons and Autonomous AI Research and Development (AI R&D) and identifies the corresponding Required Safeguards.

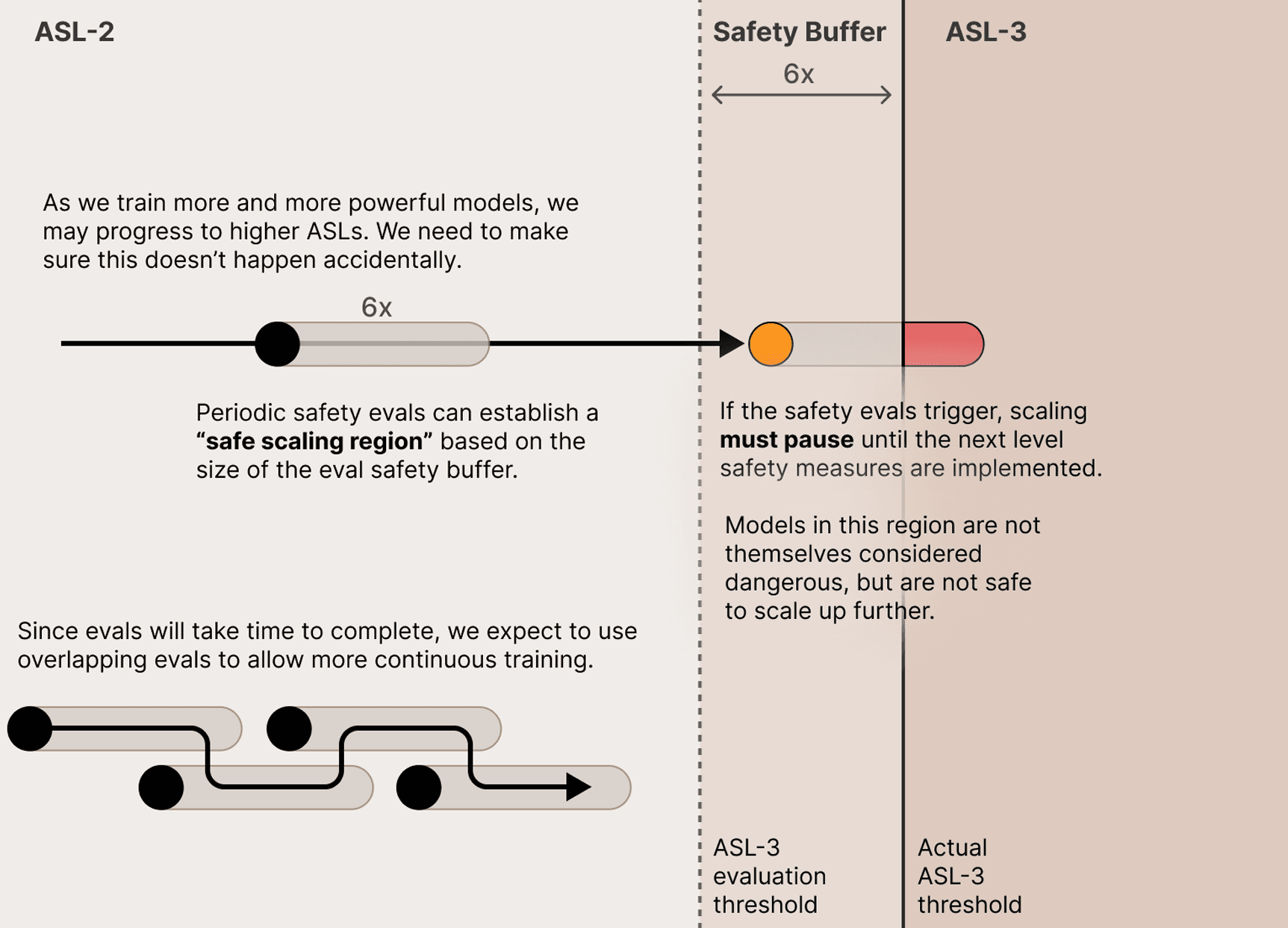

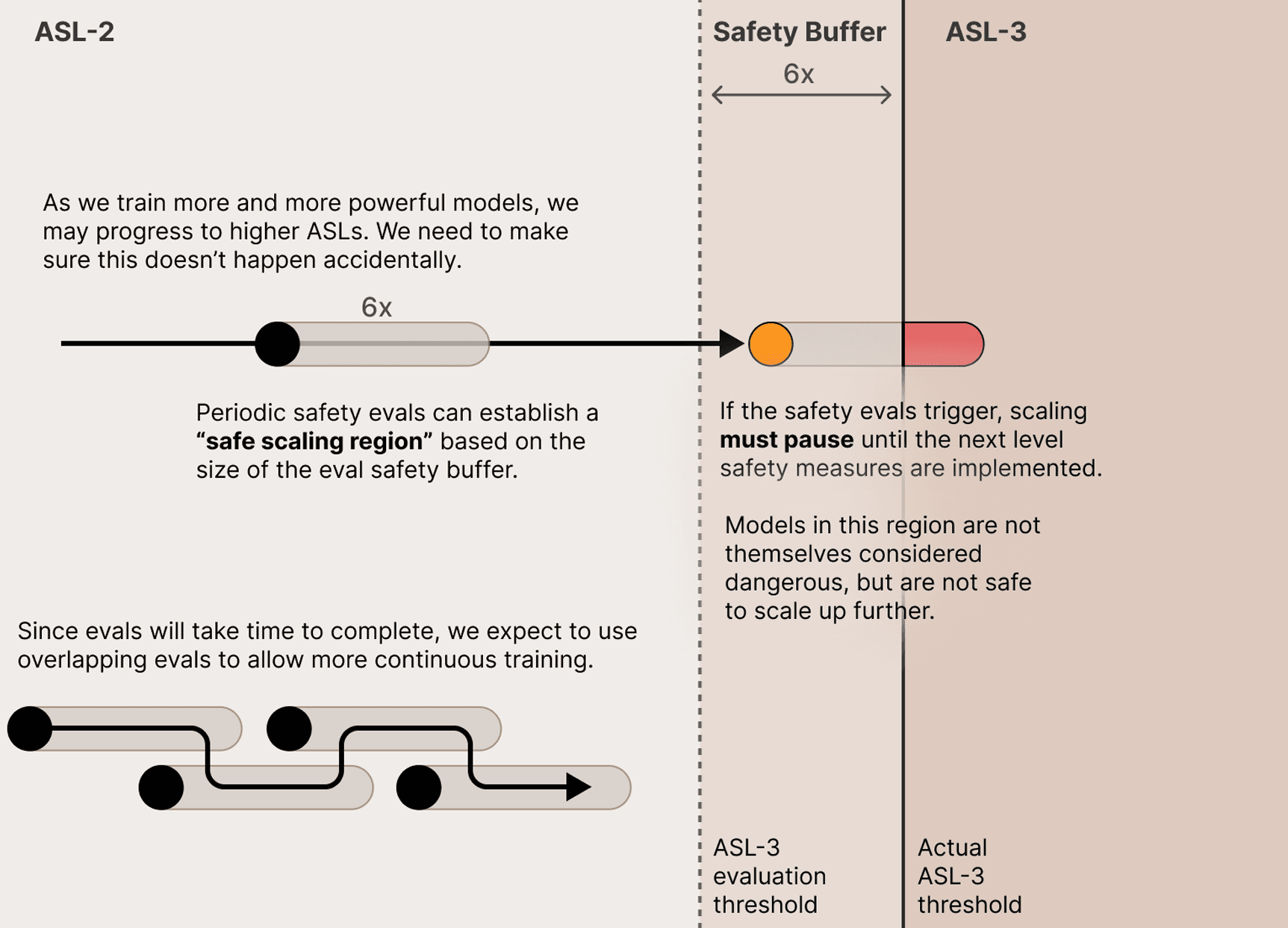

Capability assessmentWe will routinely test models to determine whether their capabilities fall sufficiently far below the Capability Thresholds such that the ASL-2 Standard remains appropriate. We will first conduct preliminary assessments to determine whether a more comprehensive evaluation is needed. For models requiring comprehensive testing, we will assess whether the model is unlikely to reach any relevant Capability Thresholds absent surprising advances in widely accessible post-training enhancements. If, after the comprehensive testing, we determine that the model is sufficiently below the relevant Capability Thresholds, then we will continue to apply the ASL-2 Standard. If, however, we are unable to make the required showing, we will act as though the model has surpassed the Capability Threshold. This means that we will both upgrade to the ASL-3 Required Safeguards and conduct a follow-up capability assessment to confirm that the ASL-4 Standard is not necessary.

Safeguards assessmentTo determine whether the measures we have adopted satisfy the ASL-3 Required Safeguards, we will conduct a safeguards assessment. For the ASL-3 Deployment Standard, we will evaluate whether it is robust to persistent attempts to misuse the capability in question. For the ASL-3 Security Standard, we will evaluate whether it is highly protected against non-state attackers attempting to steal model weights. If we determine that we have met the ASL-3 Required Safeguards, then we will proceed to deployment, provided we have also conducted a follow-up capability assessment.

Follow-up capability assessmentIn parallel with upgrading a model to the ASL-3 Required Safeguards, we will conduct a follow-up capability assessment to confirm that further safeguards are not necessary.

Deployment and scaling outcomesWe may deploy or store a model if either of the following criteria are met: (1) the model's capabilities are sufficiently far away from the existing Capability Thresholds, making the current ASL-2 Standard appropriate; or (2) the model's capabilities have surpassed the existing Capabilities Threshold, but we have implemented the ASL-3 Required Safeguards and conducted the follow-up capability assessment. In any scenario where we determine that a model requires ASL-3 Required Safeguards but we are unable to implement them immediately, we will act promptly to reduce interim risk to acceptable levels until the ASL-3 Required Safeguards are in place.

Governance and transparencyTo facilitate the effective implementation of this policy across the company, we commit to several internal governance measures, including maintaining the position of Responsible Scaling Officer, establishing a process through which Anthropic staff may anonymously notify the Responsible Scaling Officer of any potential instances of noncompliance, and developing internal safety procedures for incident scenarios. To advance the public dialogue on the regulation of frontier AI model risks and to enable examination of our actions, we will also publicly release key materials related to the evaluation and deployment of our models with sensitive information removed and solicit input from external experts in relevant domains.

Introduction

As frontier AI models advance, we believe they will bring about transformative benefits for our society and economy. AI could accelerate scientific discoveries, revolutionize healthcare, enhance our education system, and create entirely new domains for human creativity and innovation. Frontier AI models also, however, present new challenges and risks that warrant careful study and effective safeguards. In September 2023, we released our Responsible Scaling Policy (RSP), a first-of-its-kind public commitment not to train or deploy models capable of causing catastrophic harm unless we have implemented safety and security measures that will keep risks below acceptable levels. Our RSP serves several purposes: it is an internal operating procedure for investigating and mitigating these risks and helps inform the public of our plans and commitments. We also hope it will serve as a prototype for other companies looking to adopt similar frameworks and, potentially, inform regulators about possible best practices.

We are now updating our RSP to account for the lessons we've learned over the last year. This policy reflects our view that risk governance in this rapidly evolving domain should be proportional, iterative, and exportable.

First, our approach to risk should be proportional. Central to our policy is the concept of AI Safety Level Standards: technical and operational standards for safely training and deploying frontier models that correspond with a particular level of risk. By implementing safeguards that are proportional to the nature and extent of an AI model's risks, we can balance innovation with safety, maintaining rigorous protections without unnecessarily hindering progress. This approach also enables us to allocate resources efficiently, focusing our most stringent safeguards on the models that pose the greater risk, while affording more flexibility for lower-risk systems.

Second, our approach to risk should be iterative. Since the frontier of AI is rapidly evolving, we cannot anticipate what safety and security measures will be appropriate for models far beyond the current frontier. We will thus regularly measure the capability of our models and adjust our safeguards accordingly. Further, we will continue to research potential risks and next-generation mitigation techniques. And, at the highest level of generality, we will look for opportunities to improve and strengthen our overarching risk management framework.

Third, our approach to risk should be exportable. To demonstrate that it is possible to balance innovation with safety, we must put forward our proof of concept: a pragmatic, flexible, and scalable approach to risk governance. By sharing our approach externally, we aim to set a new industry standard that encourages widespread adoption of similar frameworks. In the long term, we hope that our policy may offer relevant insights for regulation. In the meantime, we will continue to share our findings with policymakers.

Although this policy focuses on catastrophic risks, they are not the only risks that we consider important. Our Usage Policy sets forth our standards for the use of our products, including prohibitions on using our models to spread misinformation, incite violence or hateful behavior, or engage in fraudulent or abusive practices, and we continually refine our technical measures for enforcing our trust and safety standards at scale. Further, we conduct research to understand the broader societal impacts of our models. Our Responsible Scaling Policy complements our work in these areas, contributing to our understanding of current and potential risks.

At Anthropic, we are committed to developing AI responsibly and transparently. Since our founding, we have recognized the importance of proactively addressing potential risks as we push the boundaries of AI capability and of clearly communicating about the nature and extent of those risks. We look forward to continuing to refine our approach to risk governance and to collaborating with stakeholders across the AI ecosystem.

This policy is designed in the spirit of the Responsible Scaling Policy (RSP) framework introduced by the non-profit AI safety organization METR, as well as emerging government policy proposals in the UK, EU, and US. This policy also helps satisfy our Voluntary White House Commitments (2023) and Frontier AI Safety Commitments (2024). We extend our sincere gratitude to the many external groups that provided invaluable guidance on the development and refinement of our Responsible Scaling Policy. We actively welcome feedback on our policy and suggestions for improvement from other entities engaged in frontier AI risk evaluations or safety and security standards. To submit your feedback or suggestions, please contact us at rsp@anthropic.com.

Introduction

Our Responsible Scaling Policy (RSP) is our voluntary framework for managing catastrophic risks from advanced AI systems. It establishes how we identify and evaluate risks, how we make decisions about AI development and deployment, and, from the perspective of the world at large, how we aim to make sure that the benefits of our models exceed their costs. We have always intended for our RSP to be a living document. We will continually update the RSP as we learn more about AI capabilities and risks, develop and refine technical safety measures, and gain more experience navigating an ecosystem in which the risks to society depend on the actions of many developers.

The major components of this third iteration are as follows:

Our recommendations for industry-wide safetyOur recommendations for industry-wide safety outline what it would take, at an industry-wide level, to keep catastrophic risks reliably low through a period of rapid advances in AI capabilities. We lay this out in a table that maps capability thresholds to the mitigations we believe they call for. We also include our planned mitigations as a company, which are drawn from other sections of this policy and associated artifacts.

This approach represents a change from our previous RSP, driven by a collective action problem. The overall level of catastrophic risk from AI depends on the actions of multiple AI developers, not just one. Our previous RSP committed to implementing mitigations that would reduce our models' absolute risk levels to acceptable levels, without regard to whether other frontier AI developers would do the same. But from a societal perspective, what matters is the risk to the ecosystem as a whole. If one AI developer paused development to implement safety measures while others moved forward training and deploying AI systems without strong mitigations, that could result in a world that is less safe--the developers with the weakest protections would set the pace, and responsible developers would lose their ability to do safety research and advance the public benefit. Although this situation has not yet arisen, it looks likely enough that we want to prepare for it.

We now separate our plans as a company--those which we expect to achieve regardless of what any other company does--from our more ambitious industry-wide recommendations. We aspire to advance the latter through a mixture of example-setting, addressing unsolved technical problems, advocacy through industry groups, and policy advocacy. But we cannot commit to following them unilaterally.

Frontier Safety RoadmapsFrontier Safety Roadmaps are a new requirement under our RSP. These will describe our concrete plans for making progress across Security, Alignment, Safeguards, and Policy. Goals described in the Roadmaps are intended to be ambitious, yet achievable--providing the kind of forcing function that we consider to be a past success of our RSP. These are not hard commitments but rather public goals against which we will openly grade our progress.

Risk ReportsRisk Reports are another new requirement. Risk Reports will provide detailed information on the safety profile of our models at the time of publication. They will go beyond describing model capabilities, addressing our thinking on how capabilities, threat models (the specific ways that models might pose threats), and active risk mitigations fit together, providing an assessment of the overall level of risk. These reports will reflect our reasoning as to whether we believe the risks of training or deploying our models are justified by their corresponding benefits to the world. They will be published online, with some redactions to protect sensitive details about, for example, our training methods and organizations with whom we work. As detailed below, we also aim to subject Risk Reports to review by credible, independent external parties.

Governance commitmentsFinally, our governance commitments are intended to promote internal and external accountability, similar to those in our previous RSP.

Our RSP is only one part of our overall approach to safety. For instance, although this policy focuses on catastrophic risks, they are not the only risks we consider important--our Usage Policy and societal impacts research address other concerns. Further, the RSP may serve some regulatory requirements, but it is not designed to be comprehensive. We want to keep it focused on our most central measures for addressing the catastrophic risks we prioritize most, rather than expand it to address every obligation we face. Where regulatory requirements exceed or differ from what the RSP covers, we will address them through separate documents.

Anthropic's Responsible Scaling Policy

Version 1.0 Effective September 19, 2023

As AI models become more capable, Anthropic believes that they will create major economic and social value, but will also present increasingly severe risks. With this document we are making a public commitment to a concrete framework for managing these risks--one that will evolve over time, but that seeks to establish clear expectations and accountability in its initial form.

We focus these commitments specifically on catastrophic risks1, defined as large-scale devastation (for example, thousands of deaths or hundreds of billions of dollars in damage) that is directly caused by an AI model and wouldn't have occurred without it. AI represents a spectrum of risks and these commitments are designed to deal with the more extreme end of this spectrum. This work is complementary to our work on other areas of AI safety, including mitigating harms like misinformation, bias, and toxicity, studying societal impacts, protecting customer privacy, building robust and reliable systems, and developing techniques like Constitutional AI for alignment with human values.

Note that these commitments primarily relate to internal testing and development practices for future more powerful versions of Claude. They do not alter current uses of Claude or any of Anthropic's present offerings (beyond safety practices we already engage in).

Our commitments are designed in the spirit of the Responsible Scaling Policy (RSP) framework being developed by Paul Christiano and ARC Evals, as well as emerging government policy proposals on responsible AI development in the UK, EU, and US. We thank ARC Evals for substantial advice and collaboration on the development of our commitments.

Risk Framework, Thresholds & Safety Measures

Framework

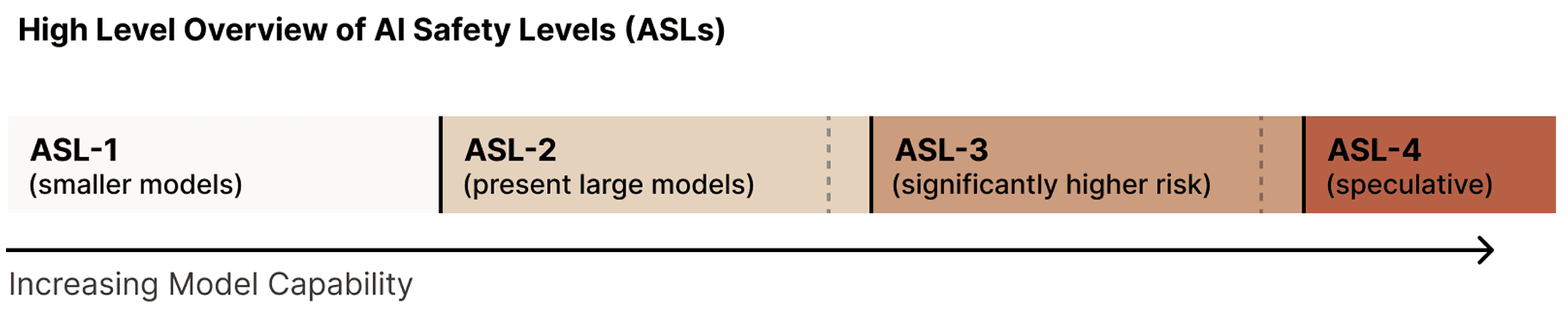

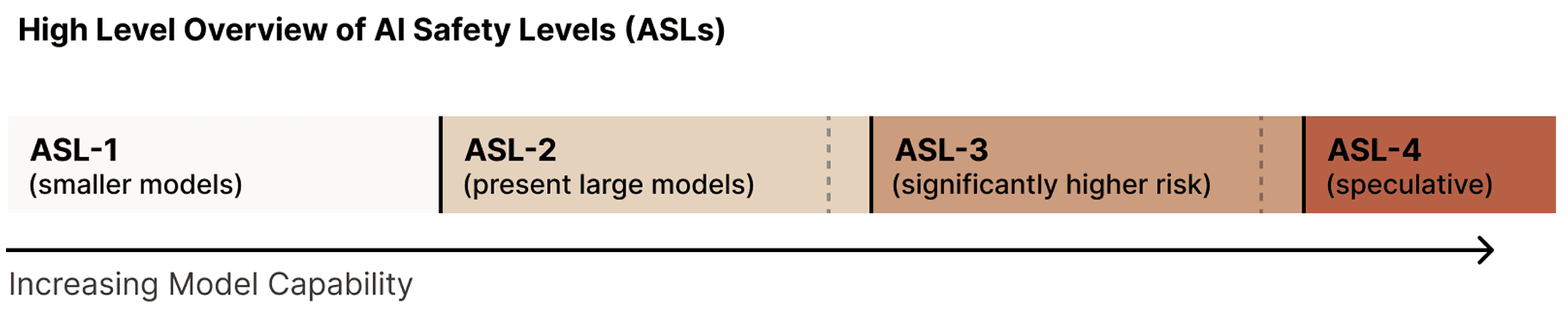

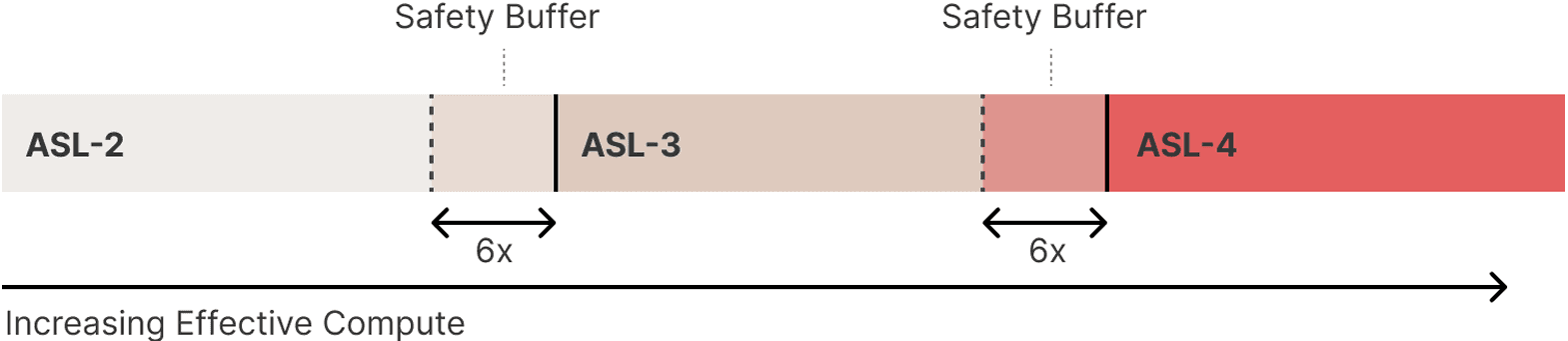

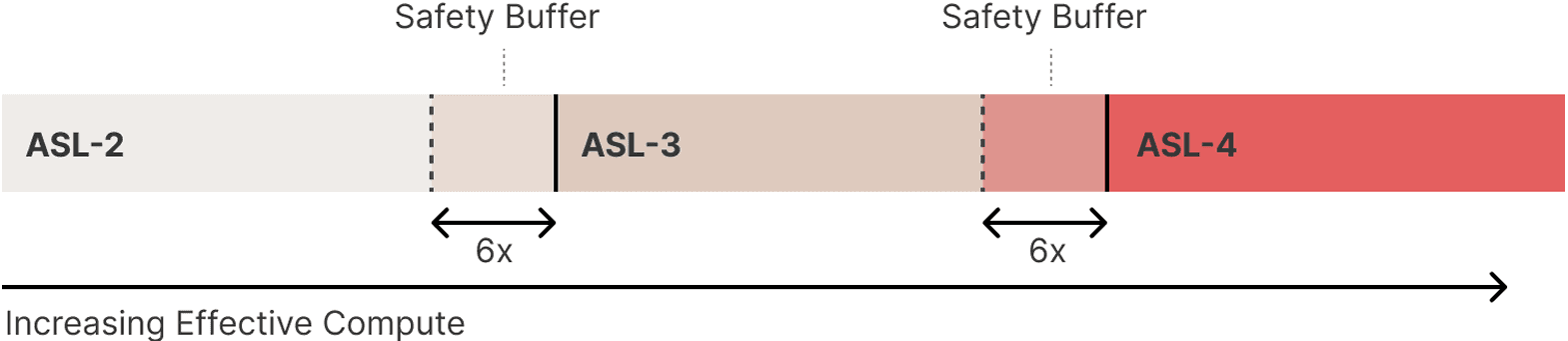

Central to our plan is the concept of AI safety levels (ASL), which are modeled loosely after the US government's biosafety level (BSL) standards for handling of dangerous biological materials. We define a series of AI capability thresholds that represent increasing potential risks, such that each ASL requires more stringent safety, security, and operational measures than the previous one. Of course, higher ASL models are also likely to be associated with increasingly powerful beneficial applications (including potentially the ability to prevent catastrophic risks), so our goal is not to prohibit development of these models, but rather to safely enable their use with appropriate precautions.

For each ASL, the framework considers two broad classes of risks:

Deployment risks: Risks that arise from active use of powerful AI models. This includes harm caused by users querying an API or other public interface, as well as misuse by internal users (compromised or malicious). Our deployment safety measures are designed to address these risks by governing when we can safely deploy a powerful AI model.

Containment risks: Risks that arise from merely possessing a powerful AI model. Examples include (1) building an AI model that, due to its general capabilities, could enable the production of weapons of mass destruction if stolen and used by a malicious actor, or (2) building a model which autonomously escapes during internal use. Our containment measures are designed to address these risks by governing when we can safely train or continue training a model.

Complying with higher ASLs is not just a procedural matter, but may sometimes require research or technical breakthroughs to give affirmative evidence of a model's safety (which is generally not possible today), demonstrated inability to elicit catastrophic risks during red-teaming (as opposed to merely a commitment to perform red-teaming), and/or unusually stringent information security controls. Anthropic's commitment to follow the ASL scheme thus implies that we commit to pause the scaling2 and/or delay the deployment of new models whenever our scaling ability outstrips our ability to comply with the safety procedures for the corresponding ASL.

One challenge with the ASL scheme as compared to BSL is that ASLs above our current capabilities represent systems that have never been built before -- in contrast to BSL, where the highest levels include specific dangerous pathogens that exist today. The ASL system thus has an unavoidable component of "building the airplane while flying it"-- we will have to start acting on many provisions of this policy before others can reasonably be specified.

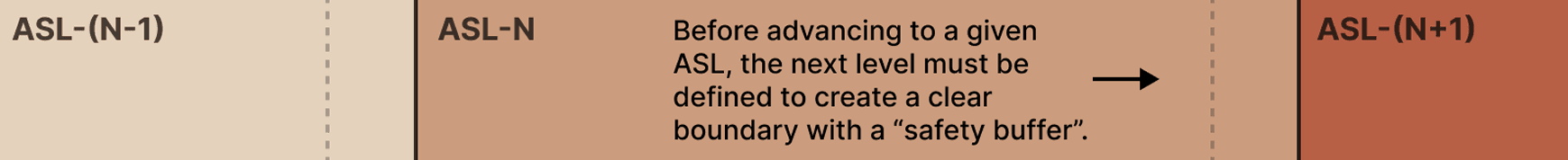

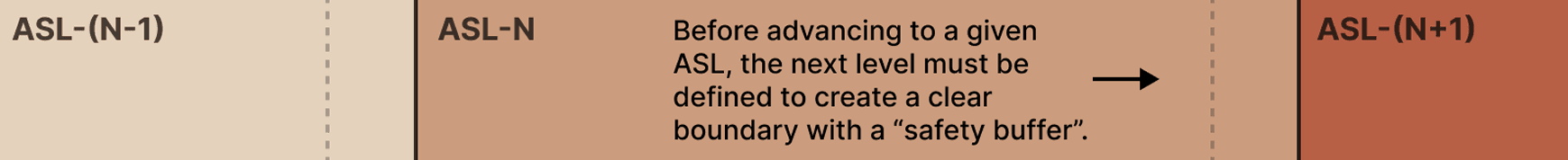

Rather than try to define all future ASLs and their safety measures now (which would almost certainly not stand the test of time), we will instead take an approach of iterative commitments. By iterative, we mean we will define ASL-2 (current system) and ASL-3 (next level of risk) now, and commit to define ASL-4 by the time we reach ASL-3, and so on.

Towards the end of this document we speculate about ASL-4+, but only to give a flavor of our current thinking and early preparation (which will likely change a lot as we get closer to ASL-4).

This document will be periodically updated as we learn more, according to an "Update Process" described below. Updates will involve both defining higher ASL levels, and making course corrections to existing levels and safety measures as we learn more. We also welcome input on this document from other groups working on AI risk assessment and safety/security measures.

Sources of Catastrophic Risk

Our current understanding suggests at least two general sources of catastrophic risk from increasingly powerful AI models. For our initial commitments, we design our evaluations and safety measures with these risks in mind:

Misuse: AI systems are dual-use technologies, and so as they become more powerful, there is an increasing risk that they will be used to intentionally cause large-scale harm, for example by helping individuals create CBRN3 or cyber threats.

Autonomy and replication: As AI systems continue to scale, they may become capable of increased autonomy that enables them to proliferate and, due to imperfections in current methods for steering such systems, potentially behave in ways contrary to the intent of their designers or users. Such systems could become a source of catastrophic risk even if no one deliberately intends to misuse them.

We are likely to revise and refine these ideas as our understanding of AI systems develops.

1. Background

AI Safety Level Standards (ASL Standards) are core to our risk mitigation strategy. An ASL Standard is a set of technical and operational measures for safely training and deploying frontier AI models. As model capabilities increase, so will the need for stronger safeguards, which are captured in successively higher ASL Standards. Definitions of ASL Standards and other key terms are available in Appendix A.

The types of measures that compose an ASL Standard currently fall into two categories -- Deployment Standards and Security Standards -- which map onto the types of risks that frontier AI models may pose.

Deployment Standards: Deployment Standards are technical, operational, and policy measures to ensure the safe usage of AI models by external users (i.e., our users and customers) as well as internal users (i.e., our employees). Deployment Standards aim to strike a balance between enabling beneficial use of AI technologies and mitigating the risks of potentially catastrophic cases of misuse.

Security Standards: Security Standards are technical, operational, and policy measures to protect AI models -- particularly their weights and associated systems -- from unauthorized access, theft, or compromise by malicious actors. Security Standards are intended to maintain the integrity and controlled use of AI models throughout their lifecycle, from development to deployment.

We expect to continue refining our framework in response to future risks (for example, the risk that an AI system attempts to subvert the goals of its operators).

At present, all of our models must meet the ASL-2 Deployment and Security Standards. The ASL-2 Security and Deployment Standards provide a baseline level of safe deployment and model security for AI models. These standards, which are summarized below, are available in full in Appendix B.

The ASL-2 Deployment Standard reduces the prevalence of misuse, and includes the publication of model cards and enforcement of Usage Policy; harmlessness training such as Constitutional AI and automated detection mechanisms; and establishing vulnerability reporting channels as well as a bug bounty for universal jailbreaks.

The ASL-2 Security Standard requires a security system that can likely thwart most opportunistic attackers and includes vendor and supplier security reviews, physical security measures, and the use of secure-by-design principles.

Although the ASL-2 Standard is appropriate for all of our current models, that may not hold true in the future as our models become more capable. To determine when a model has become sufficiently advanced such that its deployment and security measures should be strengthened, we use the concepts of Capability Thresholds and Required Safeguards.

A Capability Threshold tells us when we need to upgrade our protections, and the corresponding Required Safeguards tell us what standard should apply. A Capability Threshold is a prespecified level of AI capability that, if reached, signals (1) a meaningful increase in the level of risk if the model remains under the existing set of safeguards and (2) a corresponding need to upgrade the safeguards to a higher ASL Standard. In other words, a Capability Threshold serves as a trigger for shifting from an ASL-N Standard to an ASL-N+1 Standard (or, in some cases, moving straight to ASL N+2 or higher). Depending on the Capability Threshold, it may not be necessary to upgrade both the Deployment and Security Standards; each Capability Threshold corresponds to specific Required Safeguards that identify which of the ASL Standards must be met.

1. Our Recommendations for Industry-Wide Safety

This section outlines our recommendations for what it would take, at an industry-wide level, to keep catastrophic risks reliably low through a period of rapid advances in AI capabilities. We lay this out in a three-column table. The left column identifies capability thresholds that would call for heightened mitigations. The middle column provides an overview of our planned mitigations, which we have set forth more fully in our Frontier Safety Roadmap and other sections of this policy. The right column describes our recommendations for industry-wide safety at each threshold.

The distinction between our plans as a company (middle column) and our industry-wide recommendations (right column) reflects the limitations of any single AI developer's ability to ensure safety across the industry. In particular, we cannot unilaterally and unconditionally commit to staying in line with the industry-wide recommendations in the right column. However, these recommendations will drive important aspects of our work:

We use these recommendations as the north star for our risk mitigations planning as well as our public policy work. We will strive to advance these recommendations through a mixture of example-setting, addressing unsolved technical problems, advocacy through industry groups, and policy advocacy.

We have also adopted a set of competitor-contingent commitments (see Appendix A) aimed at staying in line with these recommendations in scenarios where we can be confident that other relevant AI developers are doing the same.

At this point in AI's rapid development, we cannot presently give highly specific advance detail on what evaluations will determine whether risk thresholds have been passed, or what risk mitigations will be needed to achieve safety. Our recommendations for industry-wide safety are thus structured around requiring analysis and arguments making a strong case for safety, rather than AI Safety Levels (more in Appendix B). This leaves flexibility in how risk thresholds are evaluated and how safety is achieved and argued for. But it creates a challenge: one actor's view of what constitutes good risk assessment and mitigation may be very different from another's.

Ultimately, the best way for these recommendations to be implemented is likely via governance of all relevant frontier AI developers by third parties that determine which developers need to provide risk analyses and make arguments for the safety of their systems, and determine which such arguments are adequate. To the extent this takes the form of national regulation, different countries should attempt to harmonize their governance, including standards of evidence, to avoid a race to the bottom. In the shorter run, independent bodies (standards-setting organizations, auditors, etc.) might review such arguments and enforce high quality for private AI developers via voluntary mechanisms.

We expect that the recommendations for industry-wide safety will evolve significantly, as we learn more about AI capabilities, threat models, and risk mitigations. We hope these recommendations will become increasingly specific over time.

Framework

Central to our plan is the concept of AI safety levels (ASL), which are modeled loosely after the US government's biosafety level (BSL) standards for handling of dangerous biological materials. We define a series of AI capability thresholds that represent increasing potential risks, such that each ASL requires more stringent safety, security, and operational measures than the previous one. Of course, higher ASL models are also likely to be associated with increasingly powerful beneficial applications (including potentially the ability to prevent catastrophic risks), so our goal is not to prohibit development of these models, but rather to safely enable their use with appropriate precautions.

For each ASL, the framework considers two broad classes of risks:

Deployment risks: Risks that arise from active use of powerful AI models. This includes harm caused by users querying an API or other public interface, as well as misuse by internal users (compromised or malicious). Our deployment safety measures are designed to address these risks by governing when we can safely deploy a powerful AI model.

Containment risks: Risks that arise from merely possessing a powerful AI model. Examples include (1) building an AI model that, due to its general capabilities, could enable the production of weapons of mass destruction if stolen and used by a malicious actor, or (2) building a model which autonomously escapes during internal use. Our containment measures are designed to address these risks by governing when we can safely train or continue training a model.

Complying with higher ASLs is not just a procedural matter, but may sometimes require research or technical breakthroughs to give affirmative evidence of a model's safety (which is generally not possible today), demonstrated inability to elicit catastrophic risks during red-teaming (as opposed to merely a commitment to perform red-teaming), and/or unusually stringent information security controls. Anthropic's commitment to follow the ASL scheme thus implies that we commit to pause the scaling2 and/or delay the deployment of new models whenever our scaling ability outstrips our ability to comply with the safety procedures for the corresponding ASL.

One challenge with the ASL scheme as compared to BSL is that ASLs above our current capabilities represent systems that have never been built before -- in contrast to BSL, where the highest levels include specific dangerous pathogens that exist today. The ASL system thus has an unavoidable component of "building the airplane while flying it"-- we will have to start acting on many provisions of this policy before others can reasonably be specified.

Rather than try to define all future ASLs and their safety measures now (which would almost certainly not stand the test of time), we will instead take an approach of iterative commitments. By iterative, we mean we will define ASL-2 (current system) and ASL-3 (next level of risk) now, and commit to define ASL-4 by the time we reach ASL-3, and so on.

Towards the end of this document we speculate about ASL-4+, but only to give a flavor of our current thinking and early preparation (which will likely change a lot as we get closer to ASL-4).

This document will be periodically updated as we learn more, according to an "Update Process" described below. Updates will involve both defining higher ASL levels, and making course corrections to existing levels and safety measures as we learn more. We also welcome input on this document from other groups working on AI risk assessment and safety/security measures.

Sources of Catastrophic Risk

Our current understanding suggests at least two general sources of catastrophic risk from increasingly powerful AI models. For our initial commitments, we design our evaluations and safety measures with these risks in mind:

Misuse: AI systems are dual-use technologies, and so as they become more powerful, there is an increasing risk that they will be used to intentionally cause large-scale harm, for example by helping individuals create CBRN3 or cyber threats.

Autonomy and replication: As AI systems continue to scale, they may become capable of increased autonomy that enables them to proliferate and, due to imperfections in current methods for steering such systems, potentially behave in ways contrary to the intent of their designers or users. Such systems could become a source of catastrophic risk even if no one deliberately intends to misuse them.

We are likely to revise and refine these ideas as our understanding of AI systems develops.

Initial Commitments

Our initial responsible scaling commitments consist of the following elements, which are visualized below and expanded on in the rest of this document.

1. ASL-2: The security and safety measures we commit to take with current state-of-the-art models, many of which we have previously committed to.

2. ASL-3: A set of dangerous capabilities we think could arise in near-future models, along with the Containment Measures we commit to implement before training such a model, and the Deployment Measures we commit to take before deploying it.

3. ASL-4 iterative commitment: We commit to define ASL-4 evaluations before we first train ASL-3 models (i.e. before continuing training beyond when ASL-3 evaluations are triggered). Similarly, we commit to define ASL-5 evaluations before training ASL-4 models, and so forth.

4. Evaluation protocol: A protocol for when and how to evaluate models for dangerous capabilities, ensuring we detect warning signs before models require higher ASL safety measures. We commit to pause training before a model's capability level outstrips the Containment Measures we have implemented.

5. Procedural commitments: A set of transparency and procedural measures to ensure verifiable compliance with the commitments in the previous bullet points. Notably, we commit to a formal process for modifying the current safety levels in response to new information, and defining future levels.

The scheme above is designed to ensure that we will always have a set of safety guardrails that govern training and deployment of our next model, without having to define all ASLs at the outset. Near the bottom of this document, we do provide a guess about higher ASLs, but we emphasize that these are so speculative that they are likely to bear little resemblance to the final version. Our hope is that the broad ASL framework can scale to extremely powerful AI, even though the actual content of the higher ASLs will need to be developed over time.

AI Safety Levels Framework

A brief visualization of the AI Safety Levels framework. All safety measures are cumulative above the previous level.

As can be seen in the table, our most significant immediate commitments include a high standard of security for ASL-3 containment, and a commitment not to deploy ASL-3 models until thorough red-teaming finds no risk of catastrophe. We expect these to be difficult, binding constraints that may become relevant in the next year or two, requiring substantial effort, investment, and planning to meet.

ASL-2 Commitments

ASL-2 Capabilities and Threat ModelsWe define ASL-24 as models that do not yet pose a risk of catastrophe, but do exhibit early signs of the necessary capabilities required for catastrophic harms. For example, ASL-2 models may (in absence of safeguards) (a) provide information related to catastrophic misuse, but not in a way that significantly elevates risk compared to existing sources of knowledge such as search engines5, or (b) provide information about catastrophic misuse cases that cannot be easily found in another way, but is inconsistent or unreliable enough to not yet present a significantly elevated risk of actual harm.

Informed by our work on frontier red teaming, our current estimate is that Claude 2 and similar frontier models exhibit (a) and sometimes exhibit (b), but do not appear (yet) to present significant actual risks of catastrophe through misuse or autonomous self-replication. Thus, we classify Claude 2 as ASL-2, and we believe the same is likely true of other frontier LLMs that exist today. It is unclear how much scale-up would be required to present a significant risk of catastrophe, but these results suggest a real risk that the next generation of models could qualify. For this reason, we commit to periodic evaluations of our future models for ASL-3 warning signs.

ASL-2 Containment MeasuresWe do not believe that merely possessing today's models poses significant risk of catastrophe; however, in keeping with our commitments earlier this year, we will treat AI model weights as core intellectual property with regards to cybersecurity and insider threat risks. You can read more about our concrete security commitments in the appendix, which include limiting access to model weights to those whose job function requires it, establishing a robust insider threat detection program, and storing and working with the weights in an appropriately secure environment to reduce the risk of unsanctioned release. More broadly, we plan to use future ASLs in part to guide and focus our safety and security investments.

Additionally, we commit to periodically evaluating for ASL-3 warning signs (described in the Evaluation Protocol below).

ASL-2 Deployment MeasuresWhile ASL-2 models do not carry significant risk of causing a catastrophe, their deployment still poses a range of trust and safety, legal, and ethical risks. To address these risks, our ASL-2 deployment commitments include:

Model cards: Publish model cards for significant new models describing capabilities, limitations, evaluations, and intended use cases. The most recent model card for Claude 2 is available here.

Acceptable use: Maintain and enforce an acceptable use policy (AUP) that restricts, at a minimum, catastrophic and high harm use cases, including using the model to generate content that could cause severe risks to the continued existence of humankind, or direct and severe harm to individuals. See our current AUP here which briefly describes our enforcement measures, which include maintaining the option to restrict access if extreme misuse issues emerge.

Vulnerability reporting: Provide clearly indicated paths for our consumer and API products where users can report harmful or dangerous model outputs or use cases. Users of claude.ai can report issues directly in the product, and API users can report issues to usersafety@anthropic.com.

Harm refusal techniques: Train models to refuse requests to aid in causing harm, such as with Constitutional AI or other improved techniques.

T&S tooling: Require model enhanced trust and safety detection and enforcement. Claude.ai, our native API, and our distribution partners currently use a classifier model to identify harmful user prompts and model completions6. If automated fine-tuning is provided, data should similarly be filtered for harmfulness, and models should be subject to automated evaluation to ensure harmlessness features are not degraded.

Our ASL-2 deployment measures overlap substantially with the White House voluntary commitments that we and other companies made in July, which we also continue to maintain.

ASL-3 Commitments

ASL-3 Capabilities and Threat ModelsWe define an ASL-3 model as one that can either immediately, or with additional post-training techniques corresponding to less than 1% of the total training cost, do at least one of the following two things. (By post-training techniques we mean the best capabilities elicitation techniques we are aware of at the time, including but not limited to fine-tuning, scaffolding, tool use, and prompt engineering.)

1. Capabilities that significantly increase risk of misuse catastrophe: Access to the model would substantially increase the risk of deliberately-caused catastrophic harm, either by proliferating capabilities, lowering costs, or enabling new methods of attack. This increase in risk is measured relative to today's baseline level of risk that comes from e.g. access to search engines and textbooks. We expect that AI systems would first elevate this risk from use by non-state attackers7.

Our first area of effort is in evaluating bioweapons risks where we will determine threat models and capabilities in consultation with a number of world-class biosecurity experts. We are now developing evaluations for these risks in collaboration with external experts to meet ASL-3 commitments, which will be a more systematized version of our recent work on frontier red-teaming. In the near future, we anticipate working with CBRN, cyber, and related experts to develop threat models and evaluations in those areas before they present substantial risks. However, we acknowledge that these evaluations are fundamentally difficult, and there remain disagreements about threat models.

2. Autonomous replication in the lab: The model shows early signs of autonomous self-replication ability, as defined by 50% aggregate success rate on the tasks listed in [Appendix on Autonomy Evaluations]. The appendix includes an overview of our threat model for autonomous capabilities and a list of the basic capabilities necessary for accumulation of resources and surviving in the real world, along with conditions under which we would judge the model to have succeeded. Note that the referenced appendix describes the ability to act autonomously specifically in the absence of any human intervention to stop the model, which limits the risk significantly. Our evaluations were developed in consultation with Paul Christiano and ARC Evals, which specializes in evaluations of autonomous replication.

Note that because safeguards such as Reinforcement Learning from Human Feedback (RLHF) or constitutional training can almost certainly be fine-tuned away within the specified 1% of training cost, and also because the ASL-3 standard applies if the model is dangerous at any stage in its training (for example after pretraining but before RLHF), fine-tuning-based safeguards are likely irrelevant to whether a model qualifies as ASL-3. To account for the possibility of model theft and subsequent fine-tuning, ASL-3 is intended to characterize the model's underlying knowledge and abilities, not whether or not its safety features prevent it from cooperating in actually outputting dangerous content (safety features however will be very important in the deployment measures for ASL-3 models).

ASL-3 Containment MeasuresA model in the ASL-3 category does not itself present a threat of containment breach due to autonomous self-replication, because it is both unlikely to be able to persist in the real world, and unlikely to overcome even simple security measures intended to prevent it from stealing its own weights. However, if the model is stolen and deployed by a malicious or careless actor, there is still (1) a significant risk of catastrophe via weaponized misuse, and (2) a small risk that the model could in fact survive and spread after new developments in post-training improvements, due to the difficulty of estimating how significant such improvements might be in the future.

Due to the importance of preventing the model weights from being stolen by such a threat actor, the containment measures we commit to implementing prior to training ASL-3 models primarily concern security:

Model weight and code security: We commit to ensuring that ASL-3 models are stored in such a manner to minimize risk of theft by a malicious actor that might use the model to cause a catastrophe. Specifically, we will implement measures designed to harden our security so that non-state attackers are unlikely to be able to steal model weights, and advanced threat actors (e.g. states) cannot steal them without significant expense. The full set of security measures that we commit to (and have already started implementing) are described in this appendix, and were developed in consultation with the authors of a forthcoming RAND report on securing AI weights.

Internal compartmentalization: We will limit access to training techniques and model hyperparameters to a need-to-know basis, in order to avoid proliferation of dangerous AI models and the empowerment of bad actors. Some initial practices such as proprietary data classification have already been implemented, though full compartmentalization of code access remains a goal prior to ASL-3.

Define and evaluate for ASL-4 warning signs: Before we first train ASL-3 models (i.e. before continuing training beyond when ASL-3 evaluations are triggered), we will develop a definition of ASL-4 capabilities along with warning sign evaluation protocols. As with ASL-3, detecting ASL-4 warning signs before corresponding safety/security measures are in place would necessitate pausing training. We anticipate that an accurate evaluation protocol for ASL-4 may be challenging to develop (for example, a misaligned ASL-4 model could display sufficient awareness to conceal its capabilities under certain testing regimes, and it could also prove hard to predict the capabilities of a large collection of models working together).

ASL-3 Deployment MeasuresWe commit to an additional set of measures for producing ASL-3 model outputs (externally or internally) as compared to merely training or possessing ASL-3 models.

Successfully pass red-teaming: World-class experts collaborating with prompt engineers should red-team the deployment thoroughly and fail to elicit information at a level of sophistication, accuracy, usefulness, detail, and frequency which significantly enables catastrophic misuse. Misuse domains should at a minimum include causes of extreme CBRN risks, and cybersecurity.

Note that in contrast to the ASL-3 capability threshold, this red-teaming is about whether the model can cause harm under realistic circumstances (i.e. with harmlessness training and misuse detection in place), not just whether it has the internal knowledge that would enable it in principle to do so.

We will refine this methodology, but we expect it to require at least many dozens of hours of deliberate red-teaming per topic area, by world class experts specifically focused on these threats (rather than students or people with general expertise in a broad domain). Additionally, this may involve controlled experiments, where people with similar levels of expertise to real threat actors are divided into groups with and without model access, and we measure the delta of success between them.

Red-team all deployed modalities: If deployment includes e.g. a fine-tuning API, release of weights, or another modality that offers a broader surface area of model modification, red-teaming must include this use of these modalities and must still return a result of no practically important catastrophic misuse. (Note that due to the potential harms presented by ASL-3 models and the possible ease of removing safeguards via fine-tuning, it may be difficult or impossible for these red-teaming tests to pass if weights are released or if unmoderated fine-tuning access is provided).

Automated detection: As a "defense in depth" addition to harm refusal techniques, classifiers or similar technologies should be deployed to detect and minimize risk of attempts at catastrophic misuse at the user or customer level. We commit to proactively address and mitigate these misuse threats, including working with appropriate law enforcement or national security authorities in the most serious cases. ASL-3+ model inputs and outputs should be retained for at least 30 days (by the customer as applicable, e.g. in their cloud account) to assist in the event of an emergency. We remain committed to data privacy, and will also explore the possibility of exceptions for some low-risk use-cases where safety can be guaranteed. This commitment applies only to ASL-3 models, and therefore does not include our current Claude 2 model or represent a change for our current products.

Internal usage controls: Automated detection as described above will also apply for internal usage within Anthropic or its distribution partners. For internal usage (e.g. red-teaming, RL training, or other R&D activities), generated outputs and corresponding inputs are also logged and retained for at least 30 days for all internal use, including when used for training. These logs are monitored for abnormal activity, including harmful use or unexpected attempts by AI systems to take real-world actions. Alarms are treated seriously and responded to promptly.

Tiered access: In limited cases, models with capabilities relevant to catastrophic harm may be made available to a select group of vetted users with a legitimate and beneficial use-case that cannot be separated from dangerous capabilities, and only if such access can be granted safely and with sufficient oversight. For example, potentially harmful biology capabilities that could be used for cancer research might be made available to a small group of vetted researchers at organizations that commit to strong, well defined, and thoroughly vetted security and internal controls.

Vulnerability and incident disclosure: Engage in a vulnerability and incident disclosure process with other labs (subject to security or legal constraints) that covers red-teaming results, national security threats, and autonomous replication threats.

Rapid response to model vulnerabilities: When informed of a newly discovered model vulnerability enabling catastrophic harm (e.g. a jailbreak or a detection failure), we commit to mitigate or patch it promptly (e.g. 50% of the time in which catastrophic harm could realistically occur). As part of this, Anthropic will maintain a publicly available channel for privately reporting model vulnerabilities.

2. Capability Thresholds and Required Safeguards

Below, we specify the Capability Thresholds and their corresponding Required Safeguards. The Required Safeguards for each Capability Threshold are intended to mitigate risk from a model with such capabilities to acceptable levels. In developing these standards, we have weighed the risks and benefits of frontier model development. We believe these safeguards are achievable with sufficient investment and advance planning into research and development and would advocate for the industry as a whole to adopt them. We will conduct assessments to inform when to implement the Required Safeguards (see Section 4). The Capability Thresholds summarized below are available in full in Appendix C.

CBRN-3: The ability to significantly help individuals or groups with basic technical backgrounds (e.g., undergraduate STEM degrees) create/obtain and deploy CBRN weapons.

This capability could greatly increase the number of actors who could cause this sort of damage, and there is no clear reason to expect an offsetting improvement in defensive capabilities. The ASL-3 Deployment Standard and the ASL-3 Security Standard, which protect against misuse and model-weight theft by non-state adversaries, are required.

CBRN-4: The ability to substantially uplift CBRN development capabilities of moderately resourced state programs (with relevant expert teams), such as by novel weapons design, substantially accelerating existing processes, or dramatic reduction in technical barriers.

We expect this threshold will require the ASL-4 Deployment and Security Standards. We plan to add more information about what those entail in a future update.

AI R&D-4: The ability to fully automate the work of an entry-level, remote-only Researcher at Anthropic.

The ASL-3 Security Standard is required. In addition, we will develop an affirmative case that (1) identifies the most immediate and relevant risks from models pursuing misaligned goals and (2) explains how we have mitigated these risks to acceptable levels. The affirmative case will describe, as relevant, evidence on model capabilities; evidence on AI alignment; mitigations (such as monitoring and other safeguards); and our overall reasoning.

AI R&D-5: The ability to cause dramatic acceleration in the rate of effective scaling.

At minimum, the ASL-4 Security Standard (which would protect against model-weight theft by state-level adversaries) is required, although we expect a higher security standard may be required. As with AI R&D-4, we also expect an affirmative case will be required.

These Capability Thresholds represent our current understanding of the most pressing catastrophic risks. As our understanding evolves, we may identify additional thresholds. For each threshold, we will identify and describe the corresponding Required Safeguards as soon as feasible, and at minimum before training or deploying any model that reaches that threshold.

We will consider it sufficient to rule out the possibility that a model has surpassed the two Autonomous AI R&D Capability Thresholds by considering an earlier (i.e., less capable) checkpoint: the ability to autonomously perform a wide range of 2-8 hour software engineering tasks. We would view this level of capability as an important checkpoint towards both Autonomous AI R&D as well as other capabilities that may warrant similar attention (for example, autonomous replication). We will test for this checkpoint and, by the time we reach it, we will (1) aim to have met (or be close to meeting) the ASL-3 Security Standard as an intermediate goal; (2) share an update on our progress around that time; and (3) begin testing for the full Autonomous AI R&D Capability Threshold and any additional risks.

We will also maintain a list of capabilities that we think require significant investigation and may require stronger safeguards than ASL-2 provides. This group of capabilities could pose serious risks, but the exact Capability Threshold and the Required Safeguards are not clear at present. These capabilities may warrant a higher standard of safeguards, such as the ASL-3 Security or Deployment Standard. However, it is also possible that by the time these capabilities are reached, there will be evidence that such a standard is not necessary (for example, because of the potential use of similar capabilities for defensive purposes). Instead of prespecifying particular thresholds and safeguards today, we will conduct ongoing assessments of the risks with the goal of determining in a future iteration of this policy what the Capability Thresholds and Required Safeguards would be.

At present, we have identified one such capability:

Cyber OperationsCyber Operations: The ability to significantly enhance or automate sophisticated destructive cyber attacks, including but not limited to discovering novel zero-day exploit chains, developing complex malware, or orchestrating extensive hard-to-detect network intrusions. Ongoing Assessment: This will involve engaging with experts in cyber operations to assess the potential for frontier models to both enhance and mitigate cyber threats, and considering the implementation of tiered access controls or phased deployments for models with advanced cyber capabilities. We will conduct either pre- or post-deployment testing, including specialized evaluations. We will document any salient results alongside our Capability Reports (see Section 3).

Overall, our decision to prioritize the capabilities in the two tables above is based on commissioned research reports, discussions with domain experts, input from expert forecasters, public research, conversations with other industry actors through the Frontier Model Forum, and internal discussions. As the field evolves and our understanding deepens, we remain committed to refining our approach.

1. Our Recommendations for Industry-Wide Safety

Non-novel chemical/biological weapons production. AI systems with the ability to significantly help individuals or groups with basic technical backgrounds (e.g., undergraduate STEM degrees) create/obtain and deploy chemical and/or biological weapons with serious potential for catastrophic damages.

We will maintain or improve on our ASL-3 protections, which include classifier guards at least as robust as our initial Constitutional Classifiers; access controls for trusted users with exemptions to classifier guards; red-teaming, bug bounties, and threat intelligence for continually assessing the threat of jailbreaks; and a number of noteworthy security controls. Specifics may change, but we will maintain equally or more robust measures over time and will publish updates in our Risk Reports. We expect to continuously meet the criteria in the right column, although we cannot make guarantees about an evolving landscape with continually adaptive attackers.

A frontier developer should make a strong argument that individual users and relatively small teams will not become significantly more likely to cause catastrophic harm via their usage of product surfaces or via theft of model weights. This will likely require: - Restrictions on model behavior, and/or measures for quickly detecting and acting on Usage Policy violations, accompanied by a strong case that these measures are difficult to reliably, sustainably circumvent via jailbreaking. - Precautions against opportunistic theft of model weights, such as centralized controls on third-party applications and software updates.

Novel chemical/biological weapons production. AI systems with the ability to significantly help threat actors (for example, moderately resourced expert-backed teams) create/obtain and deploy chemical and/or biological weapons with potential for catastrophic damages far beyond those of past catastrophes such as COVID-19.

We will apply protections at least as strong as our ASL-3 protections (see previous row) to an expanded set of potential use cases for AI, covering the most likely vectors for this threat. Additionally, we will identify the most concerning specific threat pathways, create policy recommendations for early detection and response for such threats, and share this content with policymakers.

A frontier developer should make a strong argument that threat actors will not become significantly more likely to cause the sort of catastrophic harm discussed in the lefthand column via their usage of product surfaces or via theft of model weights. This will likely require similar measures to those from the previous row, but to a higher standard--to the point where even well-resourced and -staffed threat actors would be unlikely to reliably jailbreak models or cause catastrophic harm via unauthorized access to or modification of models (including via stolen or modified model weights). This would likely mean security roughly in line with RAND SL4, but it depends on the capabilities of the strongest and most plausible threat actors that are not bound by a credible governance regime enforcing the recommendations for industry-wide safety outlined here.

High-stakes sabotage opportunities. AI systems that are highly relied on and have extensive access to sensitive assets as well as moderate capacity for autonomous, goal-directed operation and subterfuge--such that it is plausible these AI systems could (if directed toward this goal, either deliberately or inadvertently) carry out sabotage leading to irreversibly and substantially higher odds of a later global catastrophe. In the near term, this possibility will likely be most applicable to AI systems that are extensively used within major AI companies, with the opportunity to manipulate how their successor systems are trained and deployed as well as the evidence used to assess their safety. Down the line, this possibility may come to apply to AI systems deployed within government and other high-stakes settings.

We will detail the state of our AI systems' capabilities and propensities, our monitoring practices, and the overall level of risk in our Risk Reports. We expect to continually be able to meet the criteria in the right column, although we cannot make guarantees about an evolving technology that may increasingly have the ability to detect and manipulate testing.

A frontier developer should make a strong argument that AI systems will not carry out sabotage leading to irreversibly and substantially higher odds of a later global catastrophe. This case may initially be relatively simple and rely heavily on capability limitations, if it is first required when the risk is merely plausible. As risk becomes harder to rule out, this case will likely include some combination of: - Internal compartmentalization, restriction, and code review to prevent excessive sabotage opportunities for AI models. - Capability assessments demonstrating that AI models lack the ability to carry out irreversible (which would generally mean unnoticed) sabotage. - Monitoring and/or restricting AI behavior and usage internally. - Evidence that AI models lack the propensity to deceive, manipulate, or sabotage users.

Automated R&D in key domains. AI systems that can fully automate, or otherwise dramatically accelerate, the work of large, top-tier teams of human researchers in domains where fast progress could cause threats to international security and/or rapid disruptions to the global balance of power--for example, energy, robotics, weapons development and AI itself. For now, our evaluations will focus specifically on AI R&D, as this domain likely plays to AI systems' current strengths and is more tractable to assess than capabilities in other domains. Additionally, AI R&D alone could cause acceleration in AI capabilities improvements, to the point where all of the threats listed above (and more) develop very quickly. Our working operationalization is to trigger this risk threshold at the point where we determine that a model could compress two years of 2018 -- 2024 AI progress into a single year. This capability threshold is intended to reflect our definition of highly capable models (see Section 3.6). It may be sensible to add earlier, and/or easier-to-measure, thresholds that trigger less demanding versions of the mitigations for this threshold.

We will: - Resource and complete significant "moonshot R&D for security" projects, to explore ambitious and possibly unconventional ways to achieve unprecedented levels of security against the world's best-resourced attackers. - Achieve an "eyes on everything" state for our internal AI development. We will comprehensively gather, centralize, and maintain logs for all critical AI-development activities, and use AI to analyze them for issues including security threats, concerning behavior by insiders (humans as well as AI systems themselves), and training processes or data that are out of line with the public Constitution that shapes and defines our AI models. - Perform systematic alignment assessments to examine Claude's behavioral patterns and propensities, meaningfully incorporating mechanistic interpretability and adversarial red-teaming to test our auditing methods. - Develop our internal red-teaming of our deployment safeguards to the point where our internal red-teaming performs better at finding potential jailbreaks than the collective abilities of the participants in our established bug bounty programs. - Publish Risk Reports with the status of, and noteworthy findings from, all of the above, subject to external review by at least one expert, experienced, credible, candid and disinterested external party. We broadly expect to lead the industry in practices that reduce the risks from AI, although we cannot unilaterally make guarantees about the safety level of AI this advanced.

A frontier developer should make a strong argument that: - No user or team of users (including those backed by top-tier states) will become significantly more likely to cause catastrophic harm via their usage of product surfaces or via theft of model weights. This will likely require similar measures to those listed in row 1, but to a higher standard, to the point where even well-resourced and -staffed threat actors would be unlikely to reliably jailbreak models or cause catastrophic harm via unauthorized access to or modification of models (including via stolen or modified model weights). Accomplishing this would likely mean security roughly in line with RAND SL4. Security requirements would be calibrated to defend against the strongest plausible threat actors who are not bound by a credible industry-wide safety regime. Actors subject to such a regime would not need to be treated as threats to each other's model weights. - Even malicious employees and other insiders with maximal levels of access will not be significantly enabled to cause catastrophic harm. This requires (among other things) accounting for internal tools that are less restricted than product surfaces, and for the possibility of unauthorized modification of models. This will likely require an internal Usage Policy and strong internal compartmentalization, controls and/or monitoring to restrict the ability of employees and contractors (up to and including the company's CEO as well as its most privileged technical employees) to circumvent the Usage Policy. - AI models have not been deliberately or inadvertently trained with dangerous goals, or are otherwise unlikely to autonomously cause catastrophic harm. This will likely require similar measures to those listed above under "High-stakes sabotage opportunities" (some combination of internal compartmentalization, restriction and code review; monitoring AI behavior; and evidence that AI models lack the propensity to deceive and manipulate users), but to a greater degree. In particular, at this point AI systems might be responsible for much of the research and analysis that underpins risk assessment, and might have strong capabilities for deception, manipulation and obfuscation of evidence, in which case analyses of threats from AIs should follow very high evidentiary standards with thorough and careful analysis of the possibility that much of the key evidence is suspect due to the possibility of manipulation by AI systems.

Initial Commitments

Our initial responsible scaling commitments consist of the following elements, which are visualized below and expanded on in the rest of this document.

1. ASL-2: The security and safety measures we commit to take with current state-of-the-art models, many of which we have previously committed to.

2. ASL-3: A set of dangerous capabilities we think could arise in near-future models, along with the Containment Measures we commit to implement before training such a model, and the Deployment Measures we commit to take before deploying it.

3. ASL-4 iterative commitment: We commit to define ASL-4 evaluations before we first train ASL-3 models (i.e. before continuing training beyond when ASL-3 evaluations are triggered). Similarly, we commit to define ASL-5 evaluations before training ASL-4 models, and so forth.

4. Evaluation protocol: A protocol for when and how to evaluate models for dangerous capabilities, ensuring we detect warning signs before models require higher ASL safety measures. We commit to pause training before a model's capability level outstrips the Containment Measures we have implemented.

5. Procedural commitments: A set of transparency and procedural measures to ensure verifiable compliance with the commitments in the previous bullet points. Notably, we commit to a formal process for modifying the current safety levels in response to new information, and defining future levels.

The scheme above is designed to ensure that we will always have a set of safety guardrails that govern training and deployment of our next model, without having to define all ASLs at the outset. Near the bottom of this document, we do provide a guess about higher ASLs, but we emphasize that these are so speculative that they are likely to bear little resemblance to the final version. Our hope is that the broad ASL framework can scale to extremely powerful AI, even though the actual content of the higher ASLs will need to be developed over time.

AI Safety Levels Framework

A brief visualization of the AI Safety Levels framework. All safety measures are cumulative above the previous level.

As can be seen in the table, our most significant immediate commitments include a high standard of security for ASL-3 containment, and a commitment not to deploy ASL-3 models until thorough red-teaming finds no risk of catastrophe. We expect these to be difficult, binding constraints that may become relevant in the next year or two, requiring substantial effort, investment, and planning to meet.

ASL-2 Commitments

ASL-2 Capabilities and Threat ModelsWe define ASL-24 as models that do not yet pose a risk of catastrophe, but do exhibit early signs of the necessary capabilities required for catastrophic harms. For example, ASL-2 models may (in absence of safeguards) (a) provide information related to catastrophic misuse, but not in a way that significantly elevates risk compared to existing sources of knowledge such as search engines5, or (b) provide information about catastrophic misuse cases that cannot be easily found in another way, but is inconsistent or unreliable enough to not yet present a significantly elevated risk of actual harm.

Informed by our work on frontier red teaming, our current estimate is that Claude 2 and similar frontier models exhibit (a) and sometimes exhibit (b), but do not appear (yet) to present significant actual risks of catastrophe through misuse or autonomous self-replication. Thus, we classify Claude 2 as ASL-2, and we believe the same is likely true of other frontier LLMs that exist today. It is unclear how much scale-up would be required to present a significant risk of catastrophe, but these results suggest a real risk that the next generation of models could qualify. For this reason, we commit to periodic evaluations of our future models for ASL-3 warning signs.

ASL-2 Containment MeasuresWe do not believe that merely possessing today's models poses significant risk of catastrophe; however, in keeping with our commitments earlier this year, we will treat AI model weights as core intellectual property with regards to cybersecurity and insider threat risks. You can read more about our concrete security commitments in the appendix, which include limiting access to model weights to those whose job function requires it, establishing a robust insider threat detection program, and storing and working with the weights in an appropriately secure environment to reduce the risk of unsanctioned release. More broadly, we plan to use future ASLs in part to guide and focus our safety and security investments.

Additionally, we commit to periodically evaluating for ASL-3 warning signs (described in the Evaluation Protocol below).

ASL-2 Deployment MeasuresWhile ASL-2 models do not carry significant risk of causing a catastrophe, their deployment still poses a range of trust and safety, legal, and ethical risks. To address these risks, our ASL-2 deployment commitments include:

Model cards: Publish model cards for significant new models describing capabilities, limitations, evaluations, and intended use cases. The most recent model card for Claude 2 is available here.

Acceptable use: Maintain and enforce an acceptable use policy (AUP) that restricts, at a minimum, catastrophic and high harm use cases, including using the model to generate content that could cause severe risks to the continued existence of humankind, or direct and severe harm to individuals. See our current AUP here which briefly describes our enforcement measures, which include maintaining the option to restrict access if extreme misuse issues emerge.

Vulnerability reporting: Provide clearly indicated paths for our consumer and API products where users can report harmful or dangerous model outputs or use cases. Users of claude.ai can report issues directly in the product, and API users can report issues to usersafety@anthropic.com.

Harm refusal techniques: Train models to refuse requests to aid in causing harm, such as with Constitutional AI or other improved techniques.

T&S tooling: Require model enhanced trust and safety detection and enforcement. Claude.ai, our native API, and our distribution partners currently use a classifier model to identify harmful user prompts and model completions6. If automated fine-tuning is provided, data should similarly be filtered for harmfulness, and models should be subject to automated evaluation to ensure harmlessness features are not degraded.

Our ASL-2 deployment measures overlap substantially with the White House voluntary commitments that we and other companies made in July, which we also continue to maintain.

ASL-3 Commitments

ASL-3 Capabilities and Threat ModelsWe define an ASL-3 model as one that can either immediately, or with additional post-training techniques corresponding to less than 1% of the total training cost, do at least one of the following two things. (By post-training techniques we mean the best capabilities elicitation techniques we are aware of at the time, including but not limited to fine-tuning, scaffolding, tool use, and prompt engineering.)

1. Capabilities that significantly increase risk of misuse catastrophe: Access to the model would substantially increase the risk of deliberately-caused catastrophic harm, either by proliferating capabilities, lowering costs, or enabling new methods of attack. This increase in risk is measured relative to today's baseline level of risk that comes from e.g. access to search engines and textbooks. We expect that AI systems would first elevate this risk from use by non-state attackers7.

Our first area of effort is in evaluating bioweapons risks where we will determine threat models and capabilities in consultation with a number of world-class biosecurity experts. We are now developing evaluations for these risks in collaboration with external experts to meet ASL-3 commitments, which will be a more systematized version of our recent work on frontier red-teaming. In the near future, we anticipate working with CBRN, cyber, and related experts to develop threat models and evaluations in those areas before they present substantial risks. However, we acknowledge that these evaluations are fundamentally difficult, and there remain disagreements about threat models.

2. Autonomous replication in the lab: The model shows early signs of autonomous self-replication ability, as defined by 50% aggregate success rate on the tasks listed in [Appendix on Autonomy Evaluations]. The appendix includes an overview of our threat model for autonomous capabilities and a list of the basic capabilities necessary for accumulation of resources and surviving in the real world, along with conditions under which we would judge the model to have succeeded. Note that the referenced appendix describes the ability to act autonomously specifically in the absence of any human intervention to stop the model, which limits the risk significantly. Our evaluations were developed in consultation with Paul Christiano and ARC Evals, which specializes in evaluations of autonomous replication.

Note that because safeguards such as Reinforcement Learning from Human Feedback (RLHF) or constitutional training can almost certainly be fine-tuned away within the specified 1% of training cost, and also because the ASL-3 standard applies if the model is dangerous at any stage in its training (for example after pretraining but before RLHF), fine-tuning-based safeguards are likely irrelevant to whether a model qualifies as ASL-3. To account for the possibility of model theft and subsequent fine-tuning, ASL-3 is intended to characterize the model's underlying knowledge and abilities, not whether or not its safety features prevent it from cooperating in actually outputting dangerous content (safety features however will be very important in the deployment measures for ASL-3 models).

ASL-3 Containment MeasuresA model in the ASL-3 category does not itself present a threat of containment breach due to autonomous self-replication, because it is both unlikely to be able to persist in the real world, and unlikely to overcome even simple security measures intended to prevent it from stealing its own weights. However, if the model is stolen and deployed by a malicious or careless actor, there is still (1) a significant risk of catastrophe via weaponized misuse, and (2) a small risk that the model could in fact survive and spread after new developments in post-training improvements, due to the difficulty of estimating how significant such improvements might be in the future.

Due to the importance of preventing the model weights from being stolen by such a threat actor, the containment measures we commit to implementing prior to training ASL-3 models primarily concern security: